factory stats

- Description:

Provides utility functions for statistics.

- These functions are accessible via the `aidesys::` prefix.

Methods

(static) random(what, degree) → {double}

- Description:

Returns a deterministic pseudo-random number.

- This function is accessible via the `aidesys::` prefix.

Parameters:

| Name | Type | Attributes | Default | Description |
|---|---|---|---|---|
| what | char | <optional> | 'i' | Random distribution. |
| degree | uint | <optional> | 1 | The Gamma distribution degree, usually 1, 2, 5 or 10. |

Returns:

A random number.

- Type

- double

(static) getStat(data, length, hsize, histo, mask) → {string}

- Description:

Computes the usual 1D statistics, returned as a parsable weak JSON string.

`0x1` As a function of the sample moments:

- "count": the number of observed values.

- "min": the observed minimal value.

- "max": the observed maximal value.

- "mean": the mean value.

- "stdev": the standard deviation (2nd order).

  - Returns an unbiased estimator of the theoretical standard deviation `E[(x - m)^2]^(1/2)` (any standard deviation lower than DBL_EPSILON (1e-15) is set to 0).

- "skew": the skewness (3rd order).

  - Returns an estimator of the theoretical skewness `E[(x - m)^3 / stdev^3]` (skewness is zero for symmetric distributions).

- "kurt": the kurtosis (4th order).

  - Returns an estimator of the theoretical kurtosis `E[(x - m)^4 / stdev^4] - 3` (kurtosis is equal to 0 for the Gaussian distribution).
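As an illustration of the moment-based values listed above, here is a minimal standalone C++ sketch. It does not call the library: the `Moments` struct and the `computeMoments` function are hypothetical names introduced for this example, and the exact estimators used by `getStat` may differ. The sketch assumes the `(n - 1)` correction for the variance and plain `1/n` averages for the 3rd and 4th order terms.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical container for the moment-based values (not part of the library).
struct Moments {
  std::size_t count;
  double min, max, mean, stdev, skew, kurt;
};

// Computes count, min, max, mean, stdev, skewness and excess kurtosis.
Moments computeMoments(const std::vector<double>& data) {
  assert(!data.empty());
  Moments m{data.size(), data[0], data[0], 0.0, 0.0, 0.0, 0.0};
  for (double x : data) {
    m.min = std::min(m.min, x);
    m.max = std::max(m.max, x);
    m.mean += x;
  }
  m.mean /= m.count;
  // Central sums of order 2, 3 and 4.
  double s2 = 0.0, s3 = 0.0, s4 = 0.0;
  for (double x : data) {
    double d = x - m.mean;
    s2 += d * d;
    s3 += d * d * d;
    s4 += d * d * d * d;
  }
  // Assumption: variance with the (n - 1) correction.
  double var = m.count > 1 ? s2 / (m.count - 1) : 0.0;
  m.stdev = std::sqrt(var);
  // Negligible standard deviations are set to 0, using the 1e-15 threshold quoted above.
  if (m.stdev < 1e-15) m.stdev = 0.0;
  if (m.stdev > 0.0) {
    m.skew = (s3 / m.count) / (var * m.stdev);    // E[(x - m)^3] / stdev^3
    m.kurt = (s4 / m.count) / (var * var) - 3.0;  // E[(x - m)^4] / stdev^4 - 3
  }
  return m;
}
```

For the symmetric sample `{1, 2, 3, 4, 5}`, this sketch yields a mean of 3 and a skewness of 0.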

`0x2` As a function of the sample moments, with respect to usual distributions:

- "gamma-degree": the degree of a Gamma distribution, with the same mean and variance as the empirical distribution.

  - Returns the degree `d >= 1` or `0` if undefined, assuming that the probability distribution has the form `p(t) = (t / tau)^(d - 1) exp(-t / tau) / (tau (d - 1)!)`, e.g., `d = 1` for the exponential distribution (inter-event intervals of a Poisson process).

- "gamma-rate": the rate of a Gamma distribution, with the same mean and variance as the empirical distribution.

  - Returns the rate `tau >= 1` or `0` if undefined, assuming a Gamma distribution as above.

- "uniform-entropy": the entropy of a uniform distribution with the same mean and variance.

- "normal-entropy": the entropy of a Gaussian distribution with the same mean and variance.
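The Gamma parameters above can be obtained by matching moments. The following standalone sketch assumes the standard Gamma relations `mean = d * tau` and `variance = d * tau^2`, so that `d = mean^2 / variance` and `tau = variance / mean`. The `GammaFit` struct and the `fitGamma` function are hypothetical names, and the sketch does not round or clamp the degree to `d >= 1`, which the library may do.

```cpp
#include <cassert>
#include <cmath>

// Hypothetical result type (not part of the library).
struct GammaFit {
  double degree;  // d
  double rate;    // tau
};

// Matches a Gamma distribution to a given mean and variance.
// Returns {0, 0} when the parameters are undefined.
GammaFit fitGamma(double mean, double variance) {
  assert(std::isfinite(mean) && std::isfinite(variance));
  if (mean <= 0.0 || variance <= 0.0) return {0.0, 0.0};
  // From mean = d * tau and variance = d * tau^2.
  return {mean * mean / variance, variance / mean};
}
```

For example, a mean of 6 and a variance of 12 give `d = 3` and `tau = 2` under these assumptions.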

`0x4` As a function of the histogram:

- "hsize": the histogram sampling size.

- "hsize_from_standard_deviation": the histogram sampling size, according to (Scott 1979).

- "hsize_from_inter_quartile_range": the histogram sampling size, according to (Izenman 1991).

- "mode": the mode value, i.e. the most frequent value, with 2nd order interpolation.

- "median", "lower-quartile", "upper-quartile", "inter-quartile-range": The median corresponds to the percentile at 50%, the quartiles to the percentiles at 25% and 75%. Values are calculated from the histogram with 1st order interpolation.

- "median", "lower-quartile", "upper-quartile", "inter-quartile-range": Here, values are calculated from the sorted data.

- "entropy": The distribution entropy, in bits.

  - We use the approximation: `-sum_i p_i log_2(p_i) + log_2(epsilon)`, where `p_i` is the probability of the `i`-th box of size `epsilon`.
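The entropy approximation quoted above can be sketched as follows. This is a standalone illustration, not the library code: `histogramEntropy` is a hypothetical name, the histogram is assumed to be given as non-negative bin counts, and empty bins are assumed to contribute nothing to the sum.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

// Entropy in bits, estimated from histogram counts with bins of width epsilon:
// -sum_i p_i log_2(p_i) + log_2(epsilon).
double histogramEntropy(const std::vector<double>& counts, double epsilon) {
  assert(epsilon > 0.0);
  double total = 0.0;
  for (double c : counts) total += c;
  assert(total > 0.0);
  double h = 0.0;
  for (double c : counts) {
    if (c > 0.0) {  // Assumption: empty bins are skipped (0 log 0 = 0).
      double p = c / total;
      h -= p * std::log2(p);
    }
  }
  return h + std::log2(epsilon);
}
```

With four equally filled bins of width 0.25, i.e. a uniform distribution over an interval of length 1, the discrete term is 2 bits and the correction term is -2 bits, so the estimate is 0.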

`0x8` As a function of the histogram, with respect to usual distributions:

- "uniform-divergence": The Kullback-Leibler divergence between the empirical distribution and a uniform distribution having the same mean and variance.

- "normal-divergence": The Kullback-Leibler divergence between the empirical distribution and a Gaussian distribution having the same mean and variance.

- "gamma-1-divergence": The Kullback-Leibler divergence between the empirical distribution and a Gamma distribution of degree `1` having the same mean and variance.

- "gamma-2-divergence": The Kullback-Leibler divergence between the empirical distribution and a Gamma distribution of degree `2` having the same mean and variance.

- "gamma-5-divergence": The Kullback-Leibler divergence between the empirical distribution and a Gamma distribution of degree `5` having the same mean and variance.

- "gamma-10-divergence": The Kullback-Leibler divergence between the empirical distribution and a Gamma distribution of degree `10` having the same mean and variance.

- "best-model": Using the following numerical code: `0`: "normal", `1`, `2`, `5`, `10`: "gamma-1", "gamma-2", "gamma-5", "gamma-10", `-1`: "uniform" as a fallback option.

Parameters:

| Name | Type | Attributes | Default | Description |
|---|---|---|---|---|
| data | Array \| Vector \| function | | | The 1D data. |
| length | uint | | | The data length, if given as an array or density function. |
| hsize | uint | <optional> | 0 | An optional histogram size used to compute histogram-based values between min and max. If not specified but the mask includes histogram-based estimations, an optimal size is calculated as the maximum of the (Scott 1979) and (Izenman 1991) estimations, with a fallback to 100 if the estimations fail. |
| histo | Array | <optional> | | An optional |
| mask | uint | <optional> | 0xF | An optional mask to deselect some calculations, as a combination of the 0x1, 0x2, 0x4 and 0x8 groups listed in the description. |

Returns:

The 1D statistics parameters returned as a parsable JSON string.

- The following construct extracts a given parameter:

[getStatValue](.#getStatValue)(name, getStat(../..))

- Type

- string

(static) getStatValue(name, stat) → {double}

- Description:

Extracts a getStat value from the JSON string.

Parameters:

| Name | Type | Description |
|---|---|---|
| name | string | The parameter name. |
| stat | string | The string returned by getStat. |

Returns:

The related parameter value, or

- Type

- double

(static) getDivergence(data1, data2, hsize)

- Description:

Calculates the Kullback-Leibler divergence between an empirical distribution and another probability density.

- The Kullback–Leibler divergence `d(p||q) = sum_w p ln(p/q)`, where `p` is the empirical distribution and `q` the model distribution, corresponds to the average difference in the number of bits when coding `p` with `q`.
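A minimal standalone sketch of this divergence between two histograms is given below. It is an illustration under stated assumptions rather than the library implementation: `klDivergenceBits` is a hypothetical name, both inputs are assumed to be histograms of non-negative counts over the same bins, and a base-2 logarithm is used so that the result is expressed in bits.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <limits>
#include <vector>

// Kullback-Leibler divergence d(p||q) in bits between two histograms
// defined over the same bins. Both histograms are normalized internally.
double klDivergenceBits(const std::vector<double>& p,
                        const std::vector<double>& q) {
  assert(!p.empty() && p.size() == q.size());
  double sp = 0.0, sq = 0.0;
  for (std::size_t i = 0; i < p.size(); i++) {
    sp += p[i];
    sq += q[i];
  }
  assert(sp > 0.0 && sq > 0.0);
  double d = 0.0;
  for (std::size_t i = 0; i < p.size(); i++) {
    double pi = p[i] / sp, qi = q[i] / sq;
    if (pi == 0.0) continue;  // 0 log 0 = 0 by convention.
    // The model gives zero probability to an observed bin: infinite divergence.
    if (qi == 0.0) return std::numeric_limits<double>::infinity();
    d += pi * std::log2(pi / qi);
  }
  return d;
}
```

Identical histograms give a divergence of 0, and the divergence is not symmetric: swapping `p` and `q` generally changes the value.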

Parameters:

| Name | Type | Description |
|---|---|---|
| data1 | Histogram \| Array \| Vector \| function | The 1D empirical data. |
| data2 | Histogram \| Array \| Vector \| function | The 1D model distribution data. |
| hsize | uint | The histogram size. |

Returns:

The Kullback-Leibler divergence in bits.

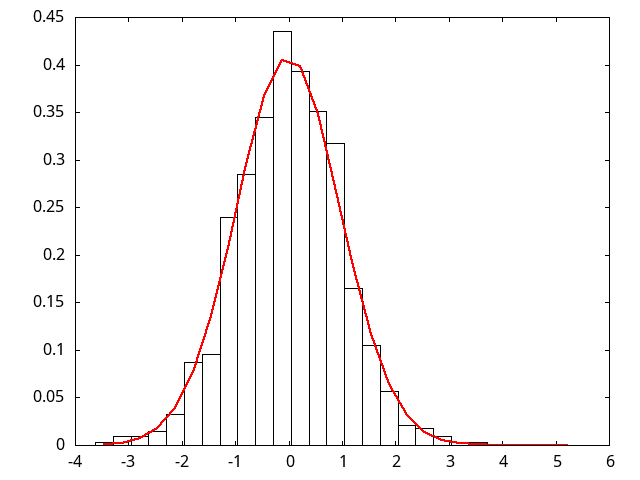

(static) plotHistogram(file, data, length, hsizeopt, model)

- Description:

Plots an histogram, as exemplified here:

Parameters:

| Name | Type | Attributes | Default | Description |
|---|---|---|---|---|
| file | string | | | The plot base name, storing in |
| data | Array \| Vector \| function \| string | | | The 1D data. |
| length | uint | | | The data length, if given as an array or density function. |
| hsize | uint | <optional> | 0 | The histogram size, automatically adjusted if |
| model | bool | | | If true, also draws the best model adjustment. |

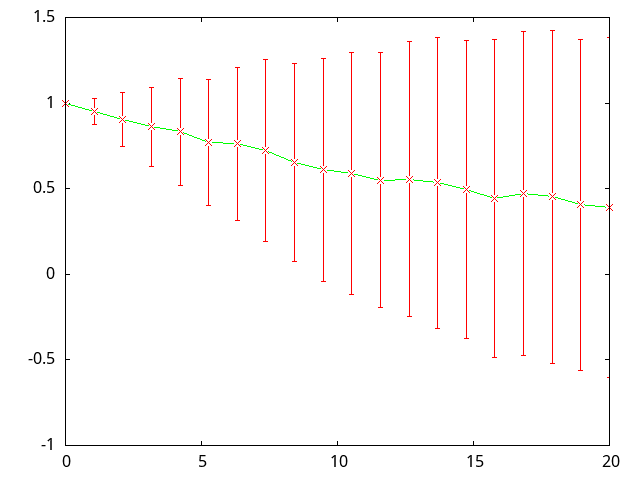

(static) plotStatCurve(file, A, x0, x1)

- Description:

Plots means and standard deviations as a curve, as exemplified here:

Parameters:

| Name | Type | Description |
|---|---|---|
| file | string | The plot base name, storing in |
| A | Array | |
| x0 | double | The abscissa of histograms[0]. |
| x1 | double | The abscissa of histograms[histograms.size()-1]. |

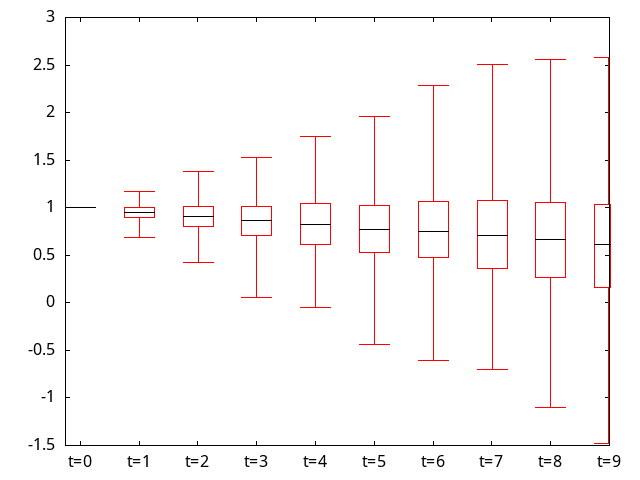

(static) plotStatBoxes(file, An)

- Description:

Plots means and standard deviations as statistical boxes, as exemplified here:

Parameters:

| Name | Type | Description |
|---|---|---|
| file | string | The plot base name, storing in |
| An | Array | |